Abstract: In this post we look at the classic example for analyzing games, the Prisoner’s Dilemma, specifically in the context of Cosmic Encounter. We then look at several strategies for the “iterated” version of the Prisoner’s Dilemma.

Perhaps one of the most impactful analog games of all time, Cosmic Encounter is a game for 3-8 players. In Cosmic Encounter, players take on the role of Aliens, competing in a game of galactic negotiation. The game is known for being a pioneer of asymmetric design, and has been reprinted multiple times since its initial release in 1977.

As mentioned above, players in Cosmic Encounter are playing a game of politics and negotiation. The main gameplay loop is built around the “encounter”. In an encounter, two players face off, each hoping to expand their race’s reach. It is this mechanic that we will attempt to analyze and explore. I will give a brief and simplified overview of this mechanic here, but for the full explanation see the rules.

During an encounter, the attacking side sends a number of ships to attack a planet. The two main players then negotiate with the other players in the game, asking them to join the encounter and help win. Other players may choose to send ships to either side that invited them. Then each main player selects one “encounter” card from their hand and plays it face down. There are two types of encounter cards, “Attack” and “Negotiate”. An Attack card adds to the total of ships you have in the encounter, while a Negotiate card attempts to start a negotiation. For negotiations to begin, both players must play a Negotiate card. If one player plays a Negotiate and the other plays an Attack, the attacking player instantly wins. Generally speaking, the outcome of a negotiation is:

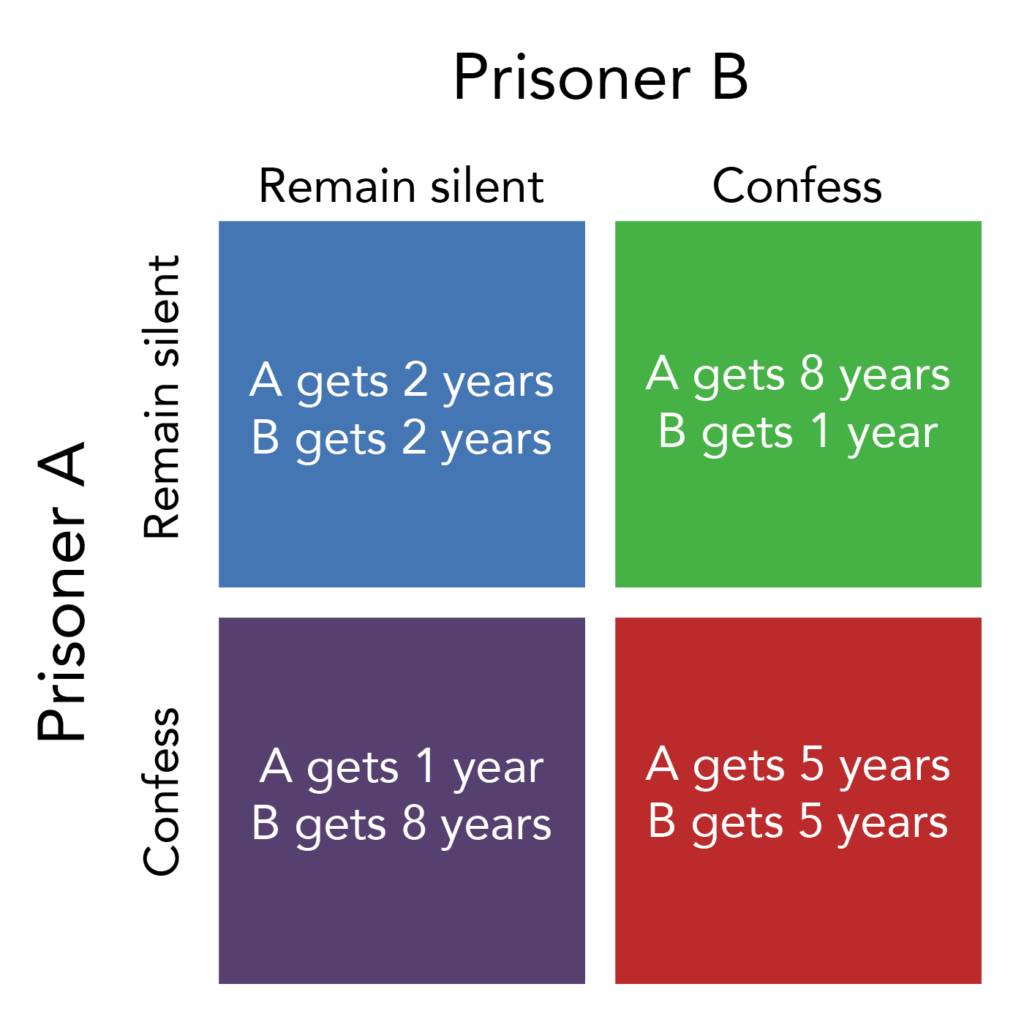

While analyzing this “meta-game” could easily become very complex due to all of the possible outcomes, we can abstract it to a very simple game, specifically the prisoner’s dilemma. For those who haven’t seen the prisoner’s dilemma before, the game is a classic example of why two rational individuals might not cooperate, even if it is in their best interest to do so. A graphical example of the game can be seen below. The game is classically stated in the following way:

“Two members of a criminal organization are arrested and imprisoned. Each prisoner is in solitary confinement with no means of communicating with the other. The prosecutors lack sufficient evidence to convict the pair on the principal charge, but they have enough to convict both on a lesser charge. Simultaneously, the prosecutors offer each prisoner a bargain. Each prisoner is given the opportunity either to betray the other by confessing that the other committed the crime, or to cooperate with the other by remaining silent.”

The outcomes of this game can be seen in the figure above. Note that for each player, no matter what the other player has chosen to do, it is in their best interest to confess. Because confessing is always better, we say that confessing is a dominant strategy, and we expect both players (assuming they act in their own best interest) to confess and betray their partner. Notice, however, that doing so results in a worse outcome for both players than if they had both chosen to remain silent. This observation will be important later on.
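The dominance argument can be checked mechanically. The sketch below is only an illustration: the prison terms in `YEARS` are assumed numbers chosen to match the usual shape of the dilemma, not values taken from the figure, and the helper names are hypothetical.

```python
# Assumed payoffs: years in prison (lower is better), written as
# (row player's years, column player's years). The numbers are illustrative.
YEARS = {
    ("silent", "silent"): (1, 1),
    ("silent", "confess"): (3, 0),
    ("confess", "silent"): (0, 3),
    ("confess", "confess"): (2, 2),
}
ACTIONS = ("silent", "confess")


def best_response(opponent_action):
    """Return the row player's action that minimizes their own prison time."""
    return min(ACTIONS, key=lambda mine: YEARS[(mine, opponent_action)][0])


def dominant_action():
    """Return the action that is a best response to every opponent action, if any."""
    responses = {best_response(theirs) for theirs in ACTIONS}
    return responses.pop() if len(responses) == 1 else None


if __name__ == "__main__":
    print("Dominant action:", dominant_action())
    print("Both confess:", YEARS[("confess", "confess")])
    print("Both silent: ", YEARS[("silent", "silent")])
```

With these assumed numbers, confessing is the best response to either choice, yet both players serve more time when they both confess than when they both stay silent.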

Next, notice that the encounter system in Cosmic Encounter is, more or less, an instance of the prisoner’s dilemma. If both players choose to negotiate, they generally receive a slightly positive outcome. If one player chooses to negotiate while the other attacks, the attacking player receives a very good outcome (a free win) while the other player receives a terrible outcome. The only case that doesn’t seem to fit at first is the one in which both players attack. However, if one were to simulate this situation many times, the payouts, assuming all other things are equal, would average out to a net payout of 0 for each player. So, we will assume that this situation ends with a payout of 0 for each player. That is better than being the negotiating player in the negotiate/attack situation, so whichever card the opponent plays, a player is incentivized to attack.
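To make the comparison concrete, here is a rough sketch that assigns numbers to the four encounter outcomes and checks them against the usual prisoner’s dilemma ordering. The values are assumptions chosen only to respect the qualitative description above (free win, small gain, zero, terrible loss); they are not taken from the rules.

```python
# Assumed, purely illustrative payoffs for one player in a single encounter.
TEMPTATION = 3   # I attack, they negotiate (a free win)
REWARD = 1       # both negotiate (a slightly positive deal)
PUNISHMENT = 0   # both attack (averages out to zero, as argued above)
SUCKER = -3      # I negotiate, they attack (a terrible outcome)


def is_prisoners_dilemma(t, r, p, s):
    """Check the standard ordering: temptation > reward > punishment > sucker."""
    return t > r > p > s


def attack_dominates(t, r, p, s):
    """Attack is better whether the opponent negotiates (t > r) or attacks (p > s)."""
    return t > r and p > s


if __name__ == "__main__":
    values = (TEMPTATION, REWARD, PUNISHMENT, SUCKER)
    print("Prisoner's dilemma ordering holds:", is_prisoners_dilemma(*values))
    print("Attack is dominant:", attack_dominates(*values))
```

Any other numbers with the same ordering would lead to the same conclusion, which is why the exact values matter less than their ranking.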

Finally, notice that over the course of a game, players will face this decision multiple times. We call this version the iterated prisoner’s dilemma. So, what is the optimal strategy? If we know the game will be played exactly a fixed number of times, we can prove that the optimal strategy is to always defect (that is, to confess). The proof is simple backward induction. If we know the game ends on a specific iteration, we might as well defect on the last round, as the other player can’t retaliate. The other player will reason the same way, so both players defect on the last round. But then cooperating on the second-to-last round cannot buy any goodwill for the last one, so it makes sense to defect there as well. We can repeat this logic for every iteration back to the first, thus it’s best to always defect.
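The backward induction argument can be sketched in a few lines. This is a simplified illustration, not a general game solver: the stage payoffs in `PAYOFF` are assumed values, and the code relies on the fact that, once later rounds are already decided, their value is a constant added to every outcome of the current round.

```python
# Assumed stage-game payoffs for one player, keyed by (my move, their move).
PAYOFF = {
    ("cooperate", "cooperate"): 1,
    ("cooperate", "defect"): -3,
    ("defect", "cooperate"): 3,
    ("defect", "defect"): 0,
}
ACTIONS = ("cooperate", "defect")


def dominant_action(future_value):
    """Return the action that is a best reply to every opponent action when
    `future_value` (the fixed worth of all later rounds) is added to each payoff."""
    replies = set()
    for theirs in ACTIONS:
        replies.add(max(ACTIONS, key=lambda mine: PAYOFF[(mine, theirs)] + future_value))
    return replies.pop() if len(replies) == 1 else None


def backward_induction(rounds):
    """Solve a game of known length from the last round back to the first."""
    plan = []
    future_value = 0  # nothing comes after the final round
    for _ in range(rounds):
        action = dominant_action(future_value)
        plan.append(action)
        # Both players reason identically, so this round pays the
        # (action, action) payoff on top of everything that follows.
        future_value += PAYOFF[(action, action)]
    plan.reverse()  # the plan was built from the last round backwards
    return plan


if __name__ == "__main__":
    print(backward_induction(5))
```

Because the future is the same whatever is played now, every round collapses to the one-shot game, and the plan is to defect in all of them.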

Luckily for us, we don’t have a hard limit on the number of encounters in Cosmic Encounter, so the same logic doesn’t apply directly. However, under standard economic models and definitions, always confessing is still an equilibrium: if the other player confesses every round, the best reply is to confess too.

One of the first strategies a player may use is the “punishment” strategy (often called the grim trigger). This strategy works in the following way. First, start off by remaining silent, and keep silent until the other player chooses to confess. Then, once they have confessed, keep confessing for the rest of the game. This strategy works because it is in the other player’s best interest to keep cooperating, as confessing even once will leave them stuck at the confess/confess outcome. However, the strategy has a downside: it has no option to “forgive”. If the other player confesses once and then wants to go back to cooperating, realizing that it would be better for them, they are unable to. Thus we need to change our strategy slightly.
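As a minimal sketch, the punishment strategy can be written as a function of the opponent’s past moves. The function names and the scripted opponent below are hypothetical, introduced only for illustration.

```python
def punishment_strategy(opponent_history):
    """Stay silent until the opponent has confessed once, then confess forever."""
    return "confess" if "confess" in opponent_history else "silent"


def play_against_script(strategy, opponent_moves):
    """Return the strategy's move in each round against a fixed opponent script.

    In round k the strategy only sees the opponent's moves from rounds 0..k-1.
    """
    moves = []
    for round_number in range(len(opponent_moves)):
        moves.append(strategy(opponent_moves[:round_number]))
    return moves


if __name__ == "__main__":
    # The opponent confesses once in the second round, then tries to cooperate again.
    script = ["silent", "confess", "silent", "silent"]
    print(play_against_script(punishment_strategy, script))
    # → ['silent', 'silent', 'confess', 'confess']
```

The last two rounds show the lack of forgiveness: the opponent has returned to silence, but the punishment strategy keeps confessing.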

The second strategy we will discuss is the “tit for tat” strategy. This strategy works by cooperating on the first iteration and then copying the opponent’s previous move on each later iteration. Much like the punishment strategy, it incentivizes the opposing player to cooperate, as not doing so will result in retaliation. It also avoids the punishment strategy’s trap: if the other player decides it would like to cooperate again, it now can (after taking one bad iteration as punishment).
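Here is a small sketch of tit for tat in Cosmic Encounter terms, where “negotiate” plays the role of cooperating and “attack” the role of confessing. The payoff numbers, the `always_attack` opponent, and the `play_match` helper are assumptions made for illustration, not part of the game’s rules.

```python
# Assumed payoffs for one player, keyed by (my card, their card).
PAYOFF = {
    ("negotiate", "negotiate"): 1,
    ("negotiate", "attack"): -3,
    ("attack", "negotiate"): 3,
    ("attack", "attack"): 0,
}


def tit_for_tat(my_history, their_history):
    """Negotiate first, then copy whatever the opponent played last round."""
    return their_history[-1] if their_history else "negotiate"


def always_attack(my_history, their_history):
    """A hypothetical opponent that attacks every round."""
    return "attack"


def play_match(strategy_a, strategy_b, rounds):
    """Play repeated encounters and return the total score of each player."""
    history_a, history_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = strategy_a(history_a, history_b)
        move_b = strategy_b(history_b, history_a)
        history_a.append(move_a)
        history_b.append(move_b)
        score_a += PAYOFF[(move_a, move_b)]
        score_b += PAYOFF[(move_b, move_a)]
    return score_a, score_b


if __name__ == "__main__":
    print(play_match(tit_for_tat, tit_for_tat, 5))    # → (5, 5)
    print(play_match(tit_for_tat, always_attack, 5))  # → (-3, 3)
```

Under these assumed payoffs, two tit for tat players negotiate every round, while against a constant attacker tit for tat loses only the first round and then matches the attacks.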

In fact, this strategy is perhaps the best of all the commonly implemented strategies. In his book The Evolution of Cooperation, Robert Axelrod describes tournaments he ran among a number of different predetermined strategies, in which tit for tat came out on top, largely for the reasons described above. In terms of validating those results, wait for the next post!

With all of that being said, we can thus conclude that using the tit for tat strategy is the best approach for deciding whether to negotiate or attack!